This is an adaptation of the original article by Svyat Login published on DOU.ua, a Ukrainian IT community and blog.

Mobile app security testing is an important part of product development that ensures protection against malware and hackers. The majority of Android apps require registration. Users enter sensitive data, like emails, phone numbers, and payment details. The data shared with an app is stored on a company’s server, and without proper security, hackers can access it.

Hopefully, our mobile app security testing tutorial will help you prevent that. It is based on testing for the top 10 vulnerabilities defined by OWASP and will be useful for QA engineers and developers.

Mobile app security vulnerabilities by OWASP

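The OWASP Top 10 list of vulnerabilities in mobile applications includes:

- M1: Improper Platform Usage

- M2: Insecure Data Storage

- M3: Insecure Communication

- M4: Insecure Authentication

- M5: Insufficient Cryptography

- M6: Insecure Authorization

- M7: Client Code Quality

- M8: Code Tampering

- M9: Reverse Engineering

- M10: Extraneous Functionality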

Mobile and web applications share at least half of their security issues, because both app types work the same way and rely on a client-server architecture.

A native application is the client on a mobile device, while a browser is the client on the web. Requests from both are sent to the same server. As a result, half of the techniques used to detect web vulnerabilities also work for finding gaps in native applications.

Let’s start with the set of tools we need for a basic application security check. Later, we’ll explain how to apply this toolkit to Android app analysis. iOS works somewhat differently, so it is a subject for a separate blog post.

How to Test Mobile App Security

So how do you test the security of a mobile app? We’ll need several things.

Test environment, i.e. an app. We can use the DIVA app. It contains the most common mobile application vulnerabilities, so you can practice finding them.

Mobile device. Use the Genymotion emulator or a real device. Just remember that the device must be rooted. Otherwise, you won’t be able to run the penetration tests.

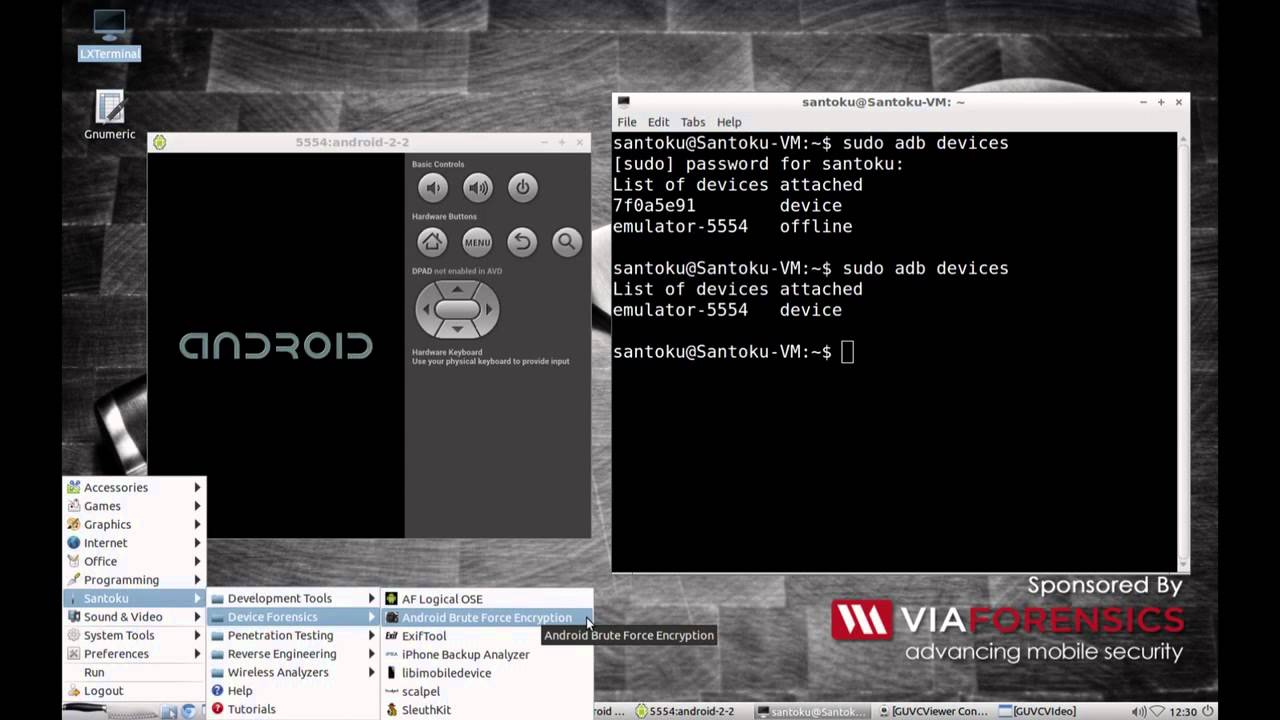

Santoku Linux. This is a Linux distribution created specifically for mobile security work, including checking Android apps for vulnerabilities. All the necessary applications for hacking are already preinstalled.

Android App Security Testing Step by Step

The 2018 OWASP statistics on how often security bugs occur provide a list of vulnerabilities to pay attention to during a mobile app audit. That list is today’s Android app security testing checklist.

We won’t go through the categories in numerical order. Instead, we’ll move straight to M9 (Reverse Engineering), since the penetration test begins with it.

M9 — Reverse Engineering

Reverse engineering of mobile code is common. This is a simple and unauthorized analysis of:

- Application source code

- Libraries

- Algorithms

- Tables, etc.

To obtain an app’s source code, copy its installation file (APK) to Santoku Linux, open the console, and run a few simple commands.

Run the command unzip -d diva-beta base.apk. As you might guess, it unzips the application and extracts the files into a folder we’ve called diva-beta.
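Because an APK is an ordinary ZIP archive, the same unpacking step can also be scripted. The following is a minimal Python sketch rather than part of the toolkit described here; the function name extract_apk is made up for illustration, and it assumes the APK has already been copied to your machine.

```python
import zipfile
from pathlib import Path


def extract_apk(apk_path, out_dir):
    """Unpack an APK (a ZIP archive) and return the names of its .dex files."""
    out = Path(out_dir)
    out.mkdir(parents=True, exist_ok=True)
    with zipfile.ZipFile(apk_path) as apk:
        # Same effect as: unzip -d diva-beta base.apk
        apk.extractall(out)
        names = apk.namelist()
    # The .dex entries hold the compiled app code used in the next step.
    return sorted(name for name in names if name.endswith(".dex"))
```

Calling extract_apk("base.apk", "diva-beta") would unpack the same file into the same folder as the unzip command and list the .dex files to convert next.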

Next, open this folder and run the command d2j-dex2jar classes.dex. It converts the Dalvik bytecode in this file into standard Java class files. If we open classes.dex without this conversion, we’ll see only unreadable binary data. After running the command, a new file, classes-dex2jar.jar, will appear in the folder. A decompiler can turn its contents into app source code that humans can read.

To open this file and begin studying the app code, we need the JD-GUI application. It is also preinstalled on Santoku Linux. Run the command jd-gui classes-dex2jar.jar.

JD-GUI will then open and display the decompiled source code. From here, we can look for shortcomings and find some vulnerabilities.

M1 — Improper Platform Usage

Now let’s jump to the M1 category. M1 covers improper use of operating system features or platform security measures. These mistakes happen often and can have a significant impact on vulnerable applications.

Since we already have the app’s source code, we’ll study one of the APK’s activities using the output of the previous step.

We can see that a developer used logcat logging to debug the app and understand the errors in this field. When the application was compiled into a release build, someone forgot to remove the debug statement.

What does this mean for app users? If there’s any error or warning, the action will be logged. When a user fills out a form (let’s say, one that accepts card data) and makes a mistake or triggers a warning, that data will appear in the application logs. As you can guess, a hacker can gain access to the logs.

Find more details in this video.
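A quick way to check a decompiled app for this kind of leftover logging is to search its sources for calls to Android’s Log class. The sketch below is one possible approach in Python; the helper name find_log_calls is hypothetical, and a plain regular expression like this will miss logging done through wrappers or other libraries.

```python
import re

# Matches calls such as Log.d(...), Log.e(...), Log.i(...), Log.v(...), Log.w(...)
LOG_CALL = re.compile(r"\bLog\.(?:d|e|i|v|w|wtf)\s*\(")


def find_log_calls(java_source):
    """Return (line_number, line) pairs for every line that calls Log.*()."""
    hits = []
    for number, line in enumerate(java_source.splitlines(), start=1):
        if LOG_CALL.search(line):
            hits.append((number, line.strip()))
    return hits
```

Each hit is worth reviewing by hand: a log call is only a problem when it writes user input or other sensitive values.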

M2 — Insecure Data Storage

This item on the OWASP list warns the development community about insecure data storage on a mobile device. A malicious user can gain physical access to a stolen device or reach its data through malware.

In case of physical access to a device, a hacker can easily open the file system after connecting the device to a computer. Many freeware programs allow a person to view directories and personal data.

So remember two things:

- Confidential in-app data must be encrypted

- Apps can share data with other apps

Let’s take, for example, the app registration form.

You register as a new user. A developer receives your data and stores it unencrypted in a public folder. Other applications installed on your phone can access it. Yes, your data leaks this easily. For more details, check out the video.
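One way to test for this on a rooted device is to copy the app’s data folder to your computer and look for files that any user may read. The following Python sketch assumes the copy kept the original Unix permission bits; the function name world_readable_files is an illustrative choice, not part of any tool mentioned here.

```python
import os
import stat


def world_readable_files(root):
    """Return paths under root whose permission bits let any user read them."""
    exposed = []
    for folder, _dirs, files in os.walk(root):
        for name in files:
            path = os.path.join(folder, name)
            mode = os.stat(path).st_mode
            if mode & stat.S_IROTH:  # the "others" read bit is set
                exposed.append(path)
    return sorted(exposed)
```

Any file this returns should then be opened to see whether it holds unencrypted user data.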

M3 — Insecure Communication

M3 is another common risk mobile app developers forget about. Data flows to and from a mobile app over mobile networks or Wi-Fi. If this transfer isn’t protected, attackers can expose users’ personal information. Hackers intercept user data on a local network through a compromised Wi-Fi network. They can also connect through routers, cell towers, or proxy servers, or use malware. The problem arises when some requests carrying user data reach the server over HTTP instead of HTTPS.

Here’s an example of how to exploit this vulnerability. An attacker sets up a compromised Wi-Fi network. A user connects. Then the attacker, acting as a man in the middle, begins to analyze all the traffic that flows through this network.

A hacker can intercept user data sent to the server via the HTTP protocol and access the credentials. Below are examples of good and poor ways to transfer data. You can also watch a video about traffic interception.

Poorly managed transfer:

Data should be encrypted. Even if an attacker connects to the same network as you and starts intercepting traffic, at least they won’t see the information in plain text. This complicates data theft, as shown in the picture below.
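A simple first check for this risk during testing is to search the decompiled sources for hard-coded http:// addresses. The Python sketch below shows the idea; the function name find_cleartext_urls is an assumption for illustration, and it only finds URLs written out in full, not ones assembled at runtime.

```python
import re

# An http:// URL up to the next whitespace, quote, or angle bracket.
CLEARTEXT_URL = re.compile(r"http://[^\s\"'<>]+")


def find_cleartext_urls(text):
    """Return the distinct http:// URLs found in text, sorted alphabetically."""
    return sorted(set(CLEARTEXT_URL.findall(text)))
```

URLs that start with https:// are not reported, so whatever remains in the result is a candidate for traffic interception.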

M5 — Insufficient Cryptography

Okay, we have applied the encryption discussed in the previous section. But if we use weak encryption/decryption processes or make errors in the algorithms that run them, user data will become vulnerable again. There are three ways attackers exploit cryptographic problems:

- Gain physical access to a mobile device

- Monitor network traffic

- Use malware to access encrypted data

To understand what methods developers use to encrypt data, we need to look at the source code we already have.

In the picture above, we can see that a developer has applied the MD5 hash function. MD5 is fast to compute and has long been considered broken, so it is one of those methods that shouts, “Break me now!”

When intercepting a request from a user, we will see data that looks encrypted.

However, MD5 is a hash, not encryption, and hashes of common passwords are widely known. If we open an online MD5 lookup service and paste the hash there, we’ll see the real user password.
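To see why an MD5 hash offers so little protection, here is a rough Python sketch of what an online hash lookup does: it hashes a list of candidate passwords in advance and then looks the intercepted hash up in that table. The word list and function names are illustrative assumptions; real services rely on far larger precomputed tables.

```python
import hashlib


def md5_hex(text):
    """Return the hexadecimal MD5 digest of a string."""
    return hashlib.md5(text.encode("utf-8")).hexdigest()


def build_lookup(candidates):
    """Precompute a digest -> password table for the candidate passwords."""
    return {md5_hex(word): word for word in candidates}


def reverse_md5(digest, lookup):
    """Return the password for a digest, or None if it is not in the table."""
    return lookup.get(digest.lower())
```

A password that appears in the candidate list is recovered with a single dictionary lookup, which is why unsalted MD5 should not be used to protect credentials.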

M4 — Insecure Authentication

Poor authentication schemes allow an attacker to anonymously perform any user action in a mobile app or on the server this app uses. Weak app authentication is quite a common issue due to the input form factor of a mobile device.

The form factor encourages short passwords, often based on four-digit PIN codes. Authentication requirements for mobile applications may differ significantly from traditional web authentication schemes, where users are expected to be connected to the network and authenticate in real time.

Once an intruder understands how vulnerable the authentication scheme is, they fake or bypass authentication by sending requests directly to the server behind the mobile app, without using the app at all.

For instance, an attacker can use a traffic analysis tool such as Burp Suite. It is enough to find out which pages this application has.

Let’s take a look at an authorization request. Here’s the data sent to the server. An attacker simply tries to obtain information from the server by reusing the original data from the request. They change the highlighted parts of the request one by one until the server grants unauthorized access to someone’s data.

In this request, you can:

- Pay attention to URLs

- Pay attention to the user type

- Try to replace the token by choosing the right one (with access to admin functions), etc.
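The checks in the list above can be prepared as a set of modified requests before they are replayed through a proxy. Below is a minimal Python sketch; the request layout and the field names user_type and Authorization are assumptions made for illustration, not the format of any real app.

```python
import copy


def tampered_variants(request, user_types, tokens):
    """Return copies of a captured request with one field changed at a time.

    request is a dict with hypothetical "headers" and "body" dicts.
    """
    variants = []
    for user_type in user_types:
        if user_type != request["body"].get("user_type"):
            variant = copy.deepcopy(request)  # never modify the original capture
            variant["body"]["user_type"] = user_type
            variants.append(variant)
    for token in tokens:
        if token != request["headers"].get("Authorization"):
            variant = copy.deepcopy(request)
            variant["headers"]["Authorization"] = token
            variants.append(variant)
    return variants
```

Changing one field per variant makes it clear which change the server accepted if a replayed request succeeds.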

M6 — Insecure Authorization

The M4 risk is often confused with M6 since both relate to user credentials. M6 is about abusing authorization after logging in as a legitimate user. M4 is the case when an attacker tries to bypass the authentication process and act as an anonymous user.

As soon as an attacker deceives the app’s security mechanism and gains access to the application as a legitimate user, they aim for administrative access. By probing different requests, a hacker may stumble upon administrator commands.

Attackers typically use botnets or malware to exploit authorization vulnerabilities. The result of this security breach is an opportunity for an attacker to perform binary attacks on a device offline.

Burp Suite can be used for this search, too. In this case, a person tries to send admin requests as an ordinary user. See vulnerability M4.
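The same check can be expressed as a small loop: send each admin request with an ordinary user’s token and record which ones the server accepts. The Python sketch below is only an outline under stated assumptions; the send function is supplied by the tester, and the paths and token are hypothetical.

```python
def find_authorization_gaps(admin_paths, user_token, send):
    """Return the admin paths that accept an ordinary user's token.

    send(path, token) is a caller-supplied function that performs the
    request and returns the HTTP status code.
    """
    gaps = []
    for path in admin_paths:
        status = send(path, user_token)
        if 200 <= status < 300:  # the server accepted the request
            gaps.append(path)
    return gaps
```

Keeping the network call behind the send parameter lets the same loop run against a proxy, a test server, or a stub.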

M7 — Client Code Quality

The M7 risk arises from poor or inconsistent coding practices, where each member of the development team follows different conventions and creates inconsistencies in the final code.

M7 isn’t the most threatening risk to app security. Although it is prevalent, this risk area is difficult to detect. It’s not easy for hackers to learn the patterns of bad coding, and the process often requires complicated manual analysis.

Due to poor coding, however, a user may experience slower request processing and be unable to load the necessary information correctly.

The story of WhatsApp is a good example. WhatsApp engineers discovered that sending a specially crafted series of packets could cause a buffer overflow. The victim didn’t even need to answer the call, and an attacker could execute arbitrary code. It turned out that the vulnerability was used to install spyware on devices.

You should not use functions that can overflow the buffer, like this:

M8 — Code Tampering

There’s not much to explain here. Just don’t download APK files from third-party sources. Hackers like to tamper with app code, as it gives them unlimited access:

- To other applications on your phone

- To user behavior

In M9 we reverse engineered the application, so we know the source code, remember? Now we can alter it (inject some kind of worm that can access data from other apps), recompile, and put the APK on some website with an “available for free” note 🙂
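One common way to spot a tampered file is to compare a downloaded APK with a checksum published by the developer, since any change to the code changes the hash. The Python sketch below assumes such a SHA-256 checksum is available; the function names are illustrative.

```python
import hashlib


def sha256_of_file(path, chunk_size=65536):
    """Return the hexadecimal SHA-256 digest of a file, read in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_published_hash(path, expected_hex):
    """Return True if the file's SHA-256 digest equals the published value."""
    return sha256_of_file(path) == expected_hex.strip().lower()
```

A mismatch means the file differs from the one the developer published, whether through tampering or a corrupted download.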

M10 — Extraneous Functionality

Before the application is ready for release, the development team often leaves code in it for easy access to the internal server. This code doesn’t affect the app’s operation in any way. However, when a malicious user finds these hints, it means trouble for the developers. What if this code contains admin credentials?

Let’s take, for example, a page where you need to enter a key to gain access to important data. We’ll study how this page looks under the hood – as the code.

We’ll see that here a developer left a hint on what to enter to gain access to the data we need. The video is here.
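Leftovers like this can often be found by searching the decompiled code for string literals assigned to suspiciously named variables. The following Python sketch is one rough way to do that; the keyword list and the function name find_hardcoded_secrets are assumptions, and it will not catch values that are compared inline or built at runtime.

```python
import re

# A variable whose name contains a sensitive keyword, assigned a string literal.
SECRET_ASSIGNMENT = re.compile(
    r'\b(\w*(?:key|password|passwd|secret|token)\w*)\s*=\s*"([^"]+)"',
    re.IGNORECASE,
)


def find_hardcoded_secrets(java_source):
    """Return (variable_name, literal_value) pairs that look like secrets."""
    return [
        (match.group(1), match.group(2))
        for match in SECRET_ASSIGNMENT.finditer(java_source)
    ]
```

Results still need manual review, because a variable name containing "key" does not always hold a secret.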

Wrapping Up

Now you know:

- About OWASP Mobile Top 10

- What tools to use to search for vulnerabilities

- What programs can help practice finding vulnerabilities

By gaining new testing skills, you can become a more valuable employee and take on more responsibilities. When management notices your progress in mobile app security testing, you’ll certainly get a reward, such as a salary review. You can also become a security specialist, and there is a big shortage of those in the market.

Just remember, the information above is for learning! You can only use it in your projects with the permission of the copyright holder.