Many teams believe that software should only be tested after it has been completed. This view comes from the Waterfall development model, an outdated process that creates more problems than it solves.

When the distinct levels of software testing are overlooked, the software usually ends up with more bugs, and those bugs cost more to fix than they would have if found earlier.

This means more work for developers and higher costs for the company, which has to pay for the extra hours spent on fixes.

In the US alone, software bugs are projected to cost about $2.8 trillion per year. Money is not the whole problem, though: some software failures affect not only consumers' finances but also their lives.

Here’s one example.

In 2016, approximately 300,000 patients with heart disease received the wrong medication. The clinical computer system in use, SystmOne, contained an error that went undetected for a long time, which led to a prolonged misassessment of these patients' risk profiles. Undertreated patients developed strokes and other health complications, while overtreated patients experienced serious side effects from unnecessary medication.

Extensive testing can prevent such critical errors and reduce the cost of debugging. Fixing a bug after launch can cost up to $16,000, compared with only $25 if it is found during development. The big question, then, is how to carry out software testing correctly.

Ideally, different levels of software testing should be performed during and after the development process. At UTOR, we use Agile testing. According to BetaNews, 97% of companies, including IBM, Microsoft, Cisco, and AT&T, use Agile methods for both development and testing.

Unlike Waterfall, Agile builds testing into development, so the software is tested continuously while it is being built. This process allows seamless interaction between the customer, developers, and testers from the moment the first line of code is written.

| AGILE | WATERFALL |
| --- | --- |
| Teamwork and progressive agreement | Protracted documentation and planning |
| User-focused software | Process-focused software |
| Customer satisfaction and a strong sense of ownership | Little or no customer interaction |
| Adjustments and changes | Rigidity |

Essentially, each stage of the software development life cycle (SDLC) has a corresponding testing process.

If you want your software to run smoothly, you must test it at each of these stages.

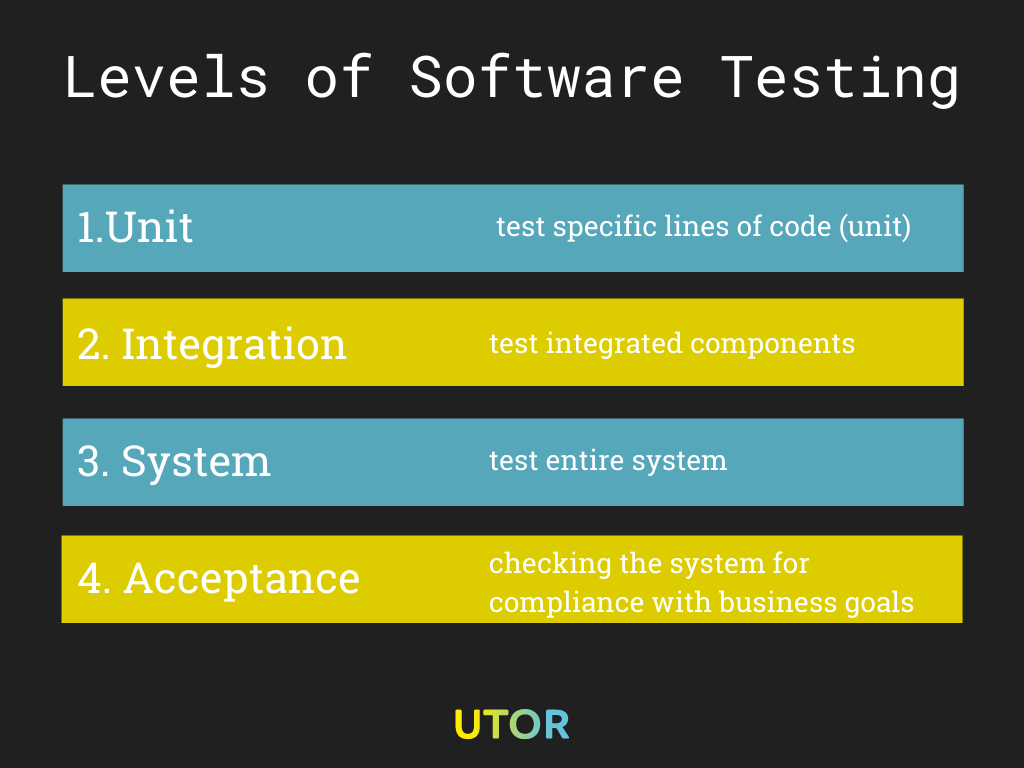

There are four main levels of software testing that must be carried out before the software is launched. These tests can be performed in-house by an organization's developers or outsourced to expert software testers like us.

They include:

- Unit (first-level) testing

- Integration (second-level) testing

- System (third-level) testing

- Acceptance (fourth-level) testing

The illustration shows the four levels of software testing: testing individual units, testing integrated units, testing the entire system, and, at the final level, checking whether the entire system is acceptable.

Unit Testing

This is the first level of software testing. How does it work? Individual pieces of code, such as distinct functions and procedures, are isolated and tested on their own. These pieces are called software units because they are combined to make up the software; they are also referred to as components.

For example, calculator software might have one function that implements addition and another that implements multiplication. These and the calculator's other functions must each be tested individually to ensure the calculator works flawlessly. You do not want to ship a calculator that performs division when users click the plus sign.

Unit testing mostly examines the internal workings of the software. It is quick and easy because it deals with the software one unit at a time rather than as a whole.
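To make the calculator example concrete, here is a minimal sketch of unit tests in Python. The functions `add`, `multiply`, `divide` and the `BUTTONS` mapping are hypothetical stand-ins for the calculator's units, not code from any real product. Each test checks one unit in isolation, and the last one catches the "plus sign performs division" bug.

```python
# Hypothetical calculator units: each operation is a separate, testable function.
def add(a: float, b: float) -> float:
    return a + b


def multiply(a: float, b: float) -> float:
    return a * b


def divide(a: float, b: float) -> float:
    if b == 0:
        raise ZeroDivisionError("cannot divide by zero")
    return a / b


# Hypothetical wiring from button symbols to the unit that should handle them.
BUTTONS = {"+": add, "*": multiply, "/": divide}


def test_add() -> None:
    assert add(2, 3) == 5
    assert add(-4, 4) == 0


def test_multiply() -> None:
    assert multiply(6, 7) == 42
    assert multiply(5, 0) == 0


def test_divide_by_zero_is_rejected() -> None:
    try:
        divide(1, 0)
    except ZeroDivisionError:
        return
    raise AssertionError("divide(1, 0) should raise ZeroDivisionError")


def test_plus_button_adds() -> None:
    # Fails if "+" is accidentally wired to divide (8 / 2 == 4, not 10).
    assert BUTTONS["+"](8, 2) == 10


if __name__ == "__main__":
    for test in (test_add, test_multiply, test_divide_by_zero_is_rejected, test_plus_button_adds):
        test()
    print("all unit tests passed")
```

Because the test functions follow the `test_` naming convention, a test runner such as pytest could also discover them automatically; the `__main__` loop simply lets the sketch run with plain Python.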

Who Performs a Unit Test?

Unit testing is usually done by the developers who write the code, often supported by the software tester(s).

Integration Testing

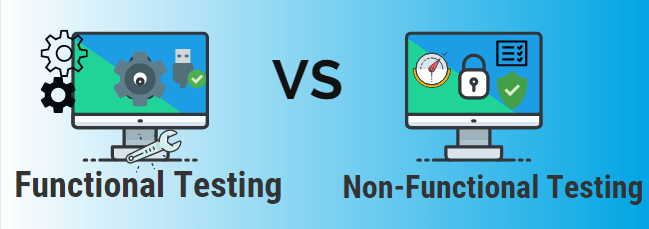

At this level, units are combined and tested as a group instead of individually, as they were during unit testing. From this level on, tests can also be split into functional and non-functional types. Why is integration testing needed?

First, the integrated modules may have been written by different developers, so they need to be tested together to confirm that they work correctly. Testing lets us identify and fix interworking defects, simultaneous-operation defects, parallel-operation defects, and so on.

Simply put, integration testing shows how well the units work together and how sound the interfaces between them are. For example, we could verify whether the following conditions from a checklist are met:

- Whether communication between the systems is carried out correctly

- Whether linked documents work smoothly on all platforms

- Whether security specifications hold during communication between systems

- Whether the software can withstand network breakdowns between the web servers and app servers
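The last checklist item can be tested without unplugging real servers. Below is a minimal Python sketch, assuming a hypothetical `FlakyAppServer` fake that raises `ConnectionError` a set number of times and a hypothetical `render_profile_page` handler on the web-server side. The retry count and the 503 response are illustrative choices, not a prescribed design.

```python
# Hypothetical stand-in for the app server, used to simulate a network breakdown.
class FlakyAppServer:
    def __init__(self, failures_before_success: int) -> None:
        self.failures_left = failures_before_success
        self.calls = 0

    def get_profile(self, user_id: str) -> dict:
        self.calls += 1
        if self.failures_left > 0:
            self.failures_left -= 1
            raise ConnectionError("app server unreachable")
        return {"id": user_id, "name": "Test User"}


# Hypothetical web-server handler under test: retries a few times,
# then returns a friendly error instead of crashing.
def render_profile_page(app_server, user_id: str, retries: int = 2) -> tuple[int, str]:
    for _ in range(retries + 1):
        try:
            profile = app_server.get_profile(user_id)
        except ConnectionError:
            continue
        return 200, f"Hello, {profile['name']}"
    return 503, "Service temporarily unavailable. Please try again."


def test_recovers_from_brief_outage() -> None:
    server = FlakyAppServer(failures_before_success=1)
    assert render_profile_page(server, "u1") == (200, "Hello, Test User")
    assert server.calls == 2


def test_degrades_gracefully_on_long_outage() -> None:
    server = FlakyAppServer(failures_before_success=10)
    status, _ = render_profile_page(server, "u1")
    assert status == 503
    assert server.calls == 3  # one initial attempt plus two retries


if __name__ == "__main__":
    test_recovers_from_brief_outage()
    test_degrades_gracefully_on_long_outage()
    print("network-breakdown checks passed")
```

Injecting the app-server object into the handler is what makes the breakdown easy to simulate; in a real project the fake would replace an HTTP or RPC client.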

A poor interface may make the software slow to switch between functions; a good interface switches as soon as the user initiates the change.

Sometimes a unit works properly when tested alone but fails when combined with other units to achieve the desired function. That indicates that integrating one or more units with the rest of the code was unsuccessful.

Unit tests can pass for every unit while integration tests still fail. This is why we carry out all recommended levels of software testing rigorously, to avoid potential pitfalls.
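Here is a minimal Python sketch of that situation, assuming two hypothetical units: `subtotal_cents` works in cents, while `format_price` expects dollars. Each passes its own unit test, but wiring them together without converting units produces a wrong receipt, and only an integration test notices.

```python
def subtotal_cents(prices_cents: list[int]) -> int:
    """Unit A (hypothetical): adds up item prices in integer cents."""
    return sum(prices_cents)


def format_price(amount_dollars: float) -> str:
    """Unit B (hypothetical): formats an amount given in dollars."""
    return f"${amount_dollars:,.2f}"


def checkout_buggy(prices_cents: list[int]) -> str:
    # Integration defect: passes cents where dollars are expected.
    return format_price(subtotal_cents(prices_cents))


def checkout_fixed(prices_cents: list[int]) -> str:
    # Converts cents to dollars at the interface between the two units.
    return format_price(subtotal_cents(prices_cents) / 100)


def unit_tests_pass() -> bool:
    return subtotal_cents([250, 1000]) == 1250 and format_price(12.5) == "$12.50"


def integration_test_passes(checkout) -> bool:
    return checkout([250, 1000]) == "$12.50"


if __name__ == "__main__":
    print("units pass in isolation:", unit_tests_pass())                          # True
    print("buggy integration passes:", integration_test_passes(checkout_buggy))   # False
    print("fixed integration passes:", integration_test_passes(checkout_fixed))   # True
```

The buggy checkout prints `$1,250.00` instead of `$12.50`: neither unit is wrong on its own, but the interface between them is.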

There are 4 main approaches we use to carry out integration testing for our clients. They include:

- Top-Down Integration testing: The rule of thumb is to test higher-level units before lower-level ones; in other words, the tester works from the top down. Lower-level modules that are not ready yet are replaced by stubs, simple stand-ins that return fixed responses.

- Bottom-Up Integration testing: Bottom-up integration works in the opposite direction. The tester starts with the lowest-level units and steps up to the higher components, using drivers (small pieces of test code that call the lower modules) until the higher-level modules are ready.

- Big-Bang Integration testing: All modules are programmed, combined, and tested together in a single phase. The big-bang model suits small applications, where the top-down and bottom-up models can be bypassed.

- Mixed (sandwich) Integration testing: This approach combines top-down and bottom-up testing. Available modules are tested whether they sit at higher or lower levels, so the tester gives priority to neither. Modules that are not yet available are simulated to determine how well the existing code will work once all the code is complete and combined.
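The stub and driver ideas behind these approaches can be sketched in a few lines of Python. This assumes a hypothetical high-level `order_total` module that depends on a hypothetical lower-level `tax_rate_for` module; the tax rates are illustrative only. The top-down test replaces the lower module with a stub built from the standard library's `unittest.mock.Mock`, and the bottom-up test acts as a driver that calls the lower module directly.

```python
from unittest.mock import Mock


# Hypothetical low-level module (in a real system it might call a tax service).
def tax_rate_for(region: str) -> float:
    rates = {"CA": 0.0725, "TX": 0.0625}
    return rates[region]


# Hypothetical high-level module; the rate lookup is injected so it can be stubbed.
def order_total(subtotal: float, region: str, rate_lookup=tax_rate_for) -> float:
    return round(subtotal * (1 + rate_lookup(region)), 2)


def top_down_test() -> None:
    # Test the higher module first; a stub stands in for the lower module.
    stub = Mock(return_value=0.10)
    assert order_total(100.0, "XX", rate_lookup=stub) == 110.0
    stub.assert_called_once_with("XX")


def bottom_up_test() -> None:
    # A driver exercises the lower module before the higher one is trusted.
    assert tax_rate_for("CA") == 0.0725
    assert tax_rate_for("TX") == 0.0625


def combined_test() -> None:
    # Real modules together, no stubs.
    assert order_total(100.0, "TX") == 106.25


if __name__ == "__main__":
    top_down_test()
    bottom_up_test()
    combined_test()
    print("integration tests passed")
```

In these terms, a big-bang approach would amount to running only `combined_test` once every module exists, while a sandwich strategy runs stub-based and driver-based tests side by side before the final combined run.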

Who Performs an Integration Test?

Integration testing should be handled by the tester(s).

System Testing

System testing verifies that the complete software performs its required operations and is compatible with the operating systems it targets. In other words, we test both the technical aspects and the business logic of the software: we run functional tests to check what the system's functions do, and non-functional tests to check how well they do it.

For instance, a functional test checks whether the login feature responds correctly when the user enters a password, while a non-functional test checks how long it takes the user to log in after entering the password.
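The login example can be sketched in Python as two kinds of checks on the same feature. This assumes a hypothetical in-memory user store and a hypothetical `login` function; PBKDF2 hashing from the standard library is used so the timing check measures some real work, and the 1-second budget is an assumed target, not a recommendation. A real non-functional test would also measure many logins under load.

```python
import hashlib
import hmac
import os
import time

_ITERATIONS = 50_000  # assumed hashing cost for this sketch


def _hash(password: str, salt: bytes) -> bytes:
    return hashlib.pbkdf2_hmac("sha256", password.encode(), salt, _ITERATIONS)


# Hypothetical in-memory user store: username -> (salt, password hash).
_alice_salt = os.urandom(16)
_USERS = {"alice": (_alice_salt, _hash("correct horse", _alice_salt))}


def login(username: str, password: str) -> bool:
    record = _USERS.get(username)
    if record is None:
        return False
    salt, stored = record
    return hmac.compare_digest(stored, _hash(password, salt))


def test_login_functional() -> None:
    # Functional: does login do the right thing?
    assert login("alice", "correct horse") is True
    assert login("alice", "wrong password") is False
    assert login("bob", "anything") is False


def test_login_response_time(max_seconds: float = 1.0) -> None:
    # Non-functional: how fast is it? The 1-second budget is a hypothetical target.
    start = time.perf_counter()
    login("alice", "correct horse")
    elapsed = time.perf_counter() - start
    assert elapsed < max_seconds, f"login took {elapsed:.3f}s"


if __name__ == "__main__":
    test_login_functional()
    test_login_response_time()
    print("functional and non-functional login checks passed")
```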


To be effective, system testing should be carried out by external testers who had nothing to do with developing the software. System testing also determines how compatible the software is with the operating systems it was designed for.

Every completed version of the software is therefore tried out on all the operating systems users are likely to have. For example, the Android version of an application may work correctly while the iOS version has issues.

System testing exposes such errors so that developers can correct them before launch. It also assesses the quality of the software's design: the internal code can work adequately while the user-facing design has operational issues. This is why system testing is essential, since it allows all such design abnormalities to be detected and corrected.
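Cross-platform defects often come from assumptions baked into the code. As a small, hypothetical illustration (using Windows and POSIX desktop systems rather than Android and iOS, because Python's standard `pathlib` can model both sets of path rules on any machine), the sketch below runs the same check against each platform's rules. The `export_path_buggy` and `export_path` functions and the test matrix are invented for this example.

```python
from pathlib import PurePath, PurePosixPath, PureWindowsPath


def export_path_buggy(base: str, filename: str, flavor: type[PurePath]) -> str:
    # Hard-codes the POSIX separator and ignores the platform's rules
    # (flavor is accepted only so both versions share one signature).
    return base + "/" + filename


def export_path(base: str, filename: str, flavor: type[PurePath]) -> str:
    # Lets the platform's path rules choose the separator.
    return str(flavor(base) / filename)


# Hypothetical test matrix: platform -> (path rules, base folder, expected result).
PLATFORMS = {
    "linux/macOS": (PurePosixPath, "/home/ana/exports", "/home/ana/exports/report.csv"),
    "windows": (PureWindowsPath, r"C:\Users\ana\exports", r"C:\Users\ana\exports\report.csv"),
}


def failing_platforms(build_path) -> list[str]:
    failures = []
    for name, (flavor, base, expected) in PLATFORMS.items():
        if build_path(base, "report.csv", flavor) != expected:
            failures.append(name)
    return failures


if __name__ == "__main__":
    print("buggy version fails on:", failing_platforms(export_path_buggy))  # ['windows']
    print("fixed version fails on:", failing_platforms(export_path))        # []
```

The buggy version passes on one platform and fails on the other, which is exactly the kind of issue that only appears when every target platform is tested.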

System testing also validates the software's overall behavior. Software can be developed correctly and still malfunction under certain system specifications.

System testing helps identify abnormal behavior and helps organizations define the system specifications under which the software runs best. Failing to gather these requirements can lead to scope creep, which means higher costs, a longer schedule, and a need for extra resources. The system will also fail to deliver the business value it promised your organization.

The identified specifications are then communicated to end users as requirements their devices must meet or exceed. This is why most commercial software today lists minimum system requirements.
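As a sketch of how such requirements might be checked, here is a small Python example. The `Device` fields and the `MINIMUM_REQUIREMENTS` values are hypothetical, not real requirements for any product; they only show how the specifications identified during system testing can become a simple, testable rule.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Device:
    os_name: str
    os_version: tuple[int, ...]
    ram_gb: float


# Hypothetical minimums of the kind system testing helps establish.
# Versions are compared as tuples, so write each one with the same number of parts.
MINIMUM_REQUIREMENTS = {
    "Android": {"os_version": (10, 0), "ram_gb": 3},
    "iOS": {"os_version": (15, 0), "ram_gb": 2},
}


def meets_minimum(device: Device) -> bool:
    req = MINIMUM_REQUIREMENTS.get(device.os_name)
    if req is None:
        return False  # OS not supported at all
    return device.os_version >= req["os_version"] and device.ram_gb >= req["ram_gb"]


if __name__ == "__main__":
    print(meets_minimum(Device("Android", (12, 0), 4)))  # True
    print(meets_minimum(Device("iOS", (14, 8), 4)))      # False: OS too old
    print(meets_minimum(Device("Android", (13, 0), 2)))  # False: not enough RAM
```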

Who Performs a System Test?

System testing should be carried out by a QA team of software testers.

Acceptance Testing

This level of software testing is similar to system testing, but the tests are carried out by selected end users. It is the only testing stage performed by users, and it determines whether the software is ready to be launched to the general public.

The selected users give their opinions about how the software operates, tell the organization whether it meets their requirements, and recommend areas for improvement. Acceptance testing is also called User Acceptance Testing (UAT).

Each organization may set its own specifics for acceptance testing, such as who tests and where, but it is vital to select users with different demographics, operating systems, and past experiences with similar software.
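One way to make sure a test panel covers different operating systems is stratified selection: group the volunteers and draw a few from each group. The sketch below assumes a hypothetical list of volunteer records with an `os` field and a hypothetical `select_uat_panel` helper; grouping by `experience` instead would cover past experience in the same way.

```python
import random
from collections import defaultdict
from typing import Optional


def select_uat_panel(
    candidates: list[dict], group_by: str, per_group: int, seed: Optional[int] = None
) -> list[dict]:
    """Pick up to `per_group` testers at random from each group (e.g. each OS)."""
    rng = random.Random(seed)
    groups = defaultdict(list)
    for person in candidates:
        groups[person[group_by]].append(person)
    panel = []
    for key in sorted(groups):  # sorted so the group order is stable
        members = groups[key]
        panel.extend(rng.sample(members, min(per_group, len(members))))
    return panel


# Hypothetical pool of volunteers.
CANDIDATES = [
    {"name": "Ada", "os": "Android", "experience": "new"},
    {"name": "Ben", "os": "Android", "experience": "expert"},
    {"name": "Chi", "os": "Android", "experience": "casual"},
    {"name": "Dee", "os": "iOS", "experience": "expert"},
    {"name": "Eli", "os": "iOS", "experience": "new"},
    {"name": "Fay", "os": "Windows", "experience": "casual"},
]

if __name__ == "__main__":
    panel = select_uat_panel(CANDIDATES, group_by="os", per_group=2, seed=7)
    for person in panel:
        print(person["os"], person["name"])
```

Random sampling within each group avoids always picking the first volunteers who signed up, while the grouping guarantees that no operating system is left out.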

There are two types of Acceptance Testing:

- Alpha Testing

- Beta Testing

Alpha Testing:

Alpha testing is carried out by selected users together with an internal team of developers, who control the testing environment and try to mimic realistic conditions. The selected users are given passwords or access keys so they can log in to the developer's platform without hindrance and use and test the software or application. Minor errors can still be found and fixed during alpha testing.

Beta Testing:

Beta testing is carried out by the selected users on their own devices and operating systems. The finished software is sent to them to use and test for a period of time before they give feedback. By the beta stage, the software is expected to be essentially free of defects, run smoothly, and meet users' needs.

Who Performs an Acceptance Test?

The end-user(s).

How to Perform the Different Levels of Software Testing in the SDLC

In conclusion, all levels of software testing are essential and must be completed before the application is launched. To see the bigger picture, you may also want to read about the types of software testing.

At UTOR, we ensure that the most experienced developers test your software. During acceptance testing, we carefully select users who will give genuine opinions about the design and operation of the software.

It is crucial to start testing your software as soon as the first code is written, so that errors and abnormalities are caught early. Also keep in mind that testing continues after launch, as future updates are implemented.

If you’re building software for your business and need proper testing, or you simply have questions about software testing, contact us. We’ll walk you through the entire process and provide the detailed insights you need.